Agentic Wiki

Modern AI chat interfaces boast massive context windows, yet they remain fundamentally constrained by one critical flaw: they are ephemeral.

You can spend hours curating inputs, extracting insights, and refining ideas within a session—but the moment that session ends, the structure of that knowledge collapses. What remains is not a system, but a transcript.

Return a month later, and you don’t continue forward, you reconstruct from fragments.

This makes chat interfaces powerful for isolated problem-solving, but fundamentally inadequate for long-term, compounding research. There is no continuity. No accumulation. No persistent state.

Node Leaf replaces this paradigm entirely.

https://github.com/nathanfx330/node-leaf/

It introduces a new architecture: the Agentic Wiki.

Instead of producing disposable outputs, AI agents maintain a persistent, evolving body of knowledge—stored locally as plain, portable Markdown (.md) files.

This is not output.

It is memory.

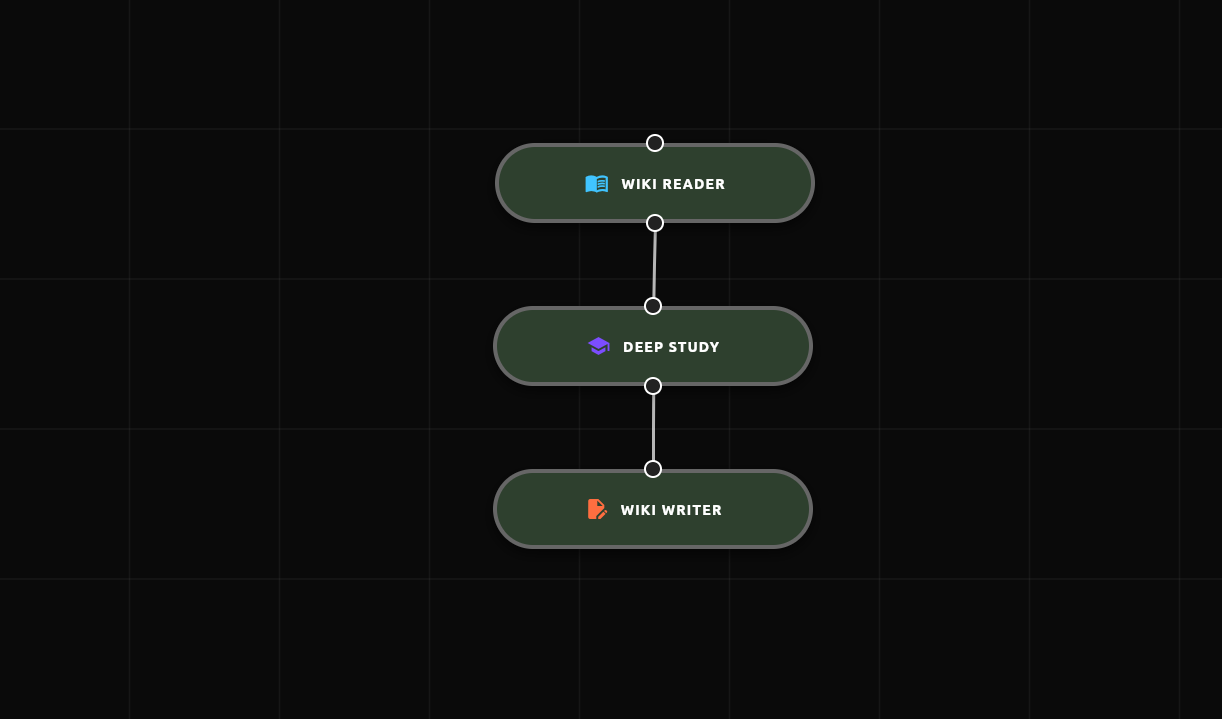

The Continuous Synthesis Loop

At the core of the Agentic Wiki is a simple but transformative principle:

Separate raw information from synthesized knowledge.

- Source of Truth: Immutable Redleaf documents

- Working Knowledge: Continuously rewritten Markdown pages

This separation enables a perpetual synthesis loop—one that behaves less like a chatbot and more like an autonomous researcher:

- Read Current State: The Wiki Reader loads the current draft of a page.

- Identify Gaps: A Deep Study node evaluates the draft and retrieves missing material from the Redleaf corpus.

- Rewrite and Persist: The Wiki Writer integrates new findings, restructures the document, and writes it back to disk.

This loop is not episodic—it is continuous. The system does not forget. It does not reset. It improves.

Agentic Wiki Node-chain

Epistemological Integrity

Real research is not linear. Sources conflict. Interpretations shift. Understanding evolves.

Most systems flatten this process, overwriting old conclusions with new ones, erasing the reasoning that led there. The Agentic Wiki does the opposite. Contradictions are preserved explicitly:

“Earlier analysis suggested X; however, newly examined [Doc Y] indicates Z.”

Every claim remains anchored to its source. To enforce this integrity, every write operation is versioned locally. Before modification, the system creates a timestamped backup in a hidden .history directory.

It behaves like version control—without introducing friction.

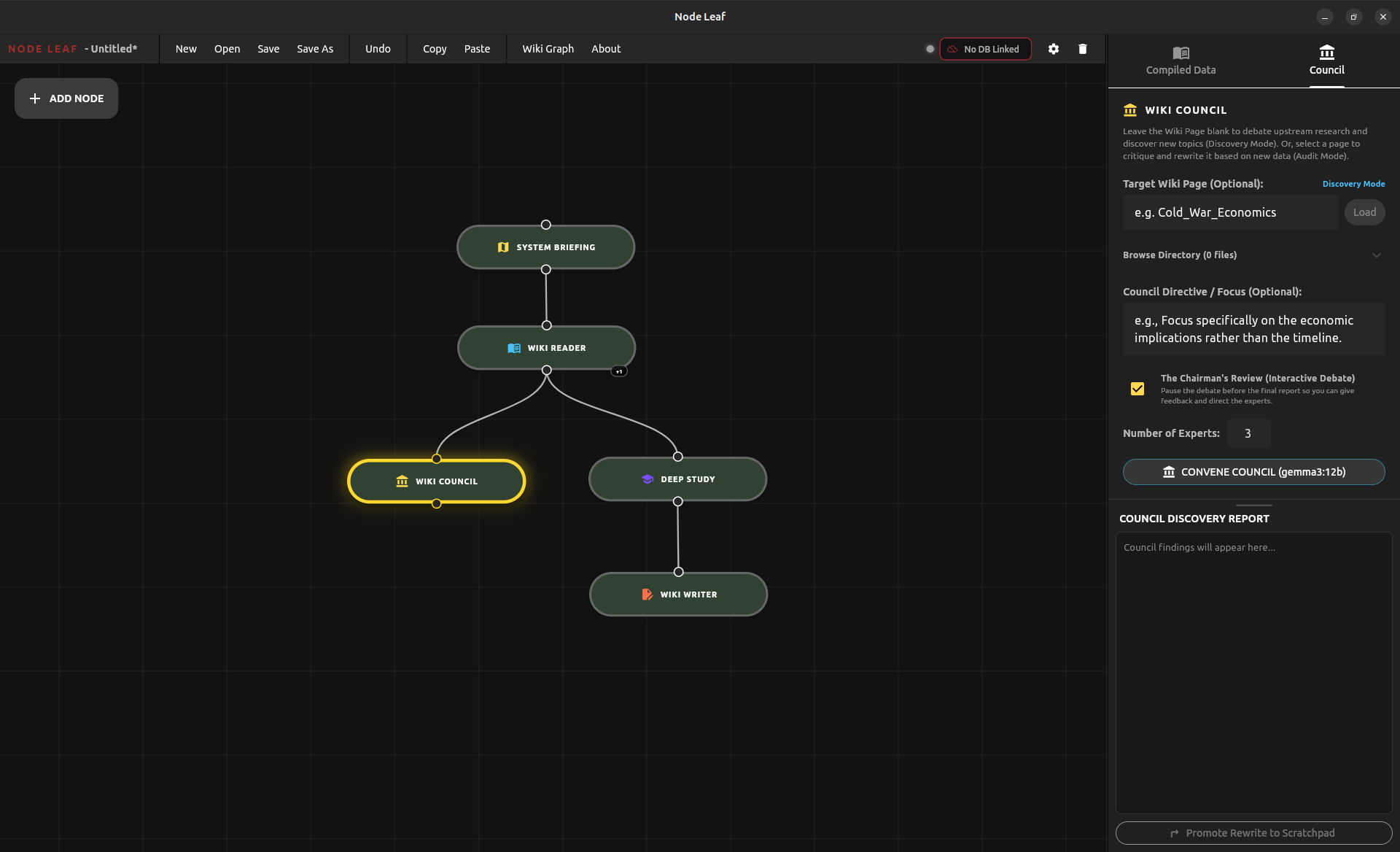

The Wiki Council: Steering the Knowledge Frontier

Deep research naturally converges. Once a topic is well-developed, exploration tends to narrow. The Agentic Wiki counteracts this with the Wiki Council—a simulated Mixture of Experts (MoE) designed to audit your Wiki against the broader Redleaf knowledge graph and expose blind spots.

But autonomous agents introduce a hazard: unguided compute waste.

Letting a swarm of experts loose is a roll of the dice. Often, the resulting conversation just misses the mark. You spin up the agents, and they wander down a path you simply don’t care about. Rerolling doesn’t fix it; they just miss in a different way. Meanwhile, your local GPU is pinned at 100%, wasting time and heat on a useless output.

To solve this, the Wiki Council is not a black box. It is a steerable, Human-in-the-Loop (HITL) system:

- The Directive: Before the council convenes, you issue a strict focus (e.g., “Focus entirely on the economic implications”). The agents must operate within this bounding box, eliminating wasted compute.

- Context & Mapping: Core themes are distilled from the Wiki and queried against the Redleaf corpus.

- Structured Debate: A rotating set of expert personas (Visionary, Skeptic, Archivist) interrogate the gaps, strictly adhering to your Directive.

- The Chairman’s Review: The debate pauses mid-stream. You act as “The Chairman,” reading the live transcript and injecting feedback. If the conversation is drifting, you rein it in. The experts respond directly to your critique before proceeding.

- Synthesis: A Director persona consolidates the aligned discussion into a structured Audit Report.

The output is an actionable, prioritized map of missing connections and suggested new .md files. It functions as an autonomous, steerable “What’s Next?” engine.

Wiki Council

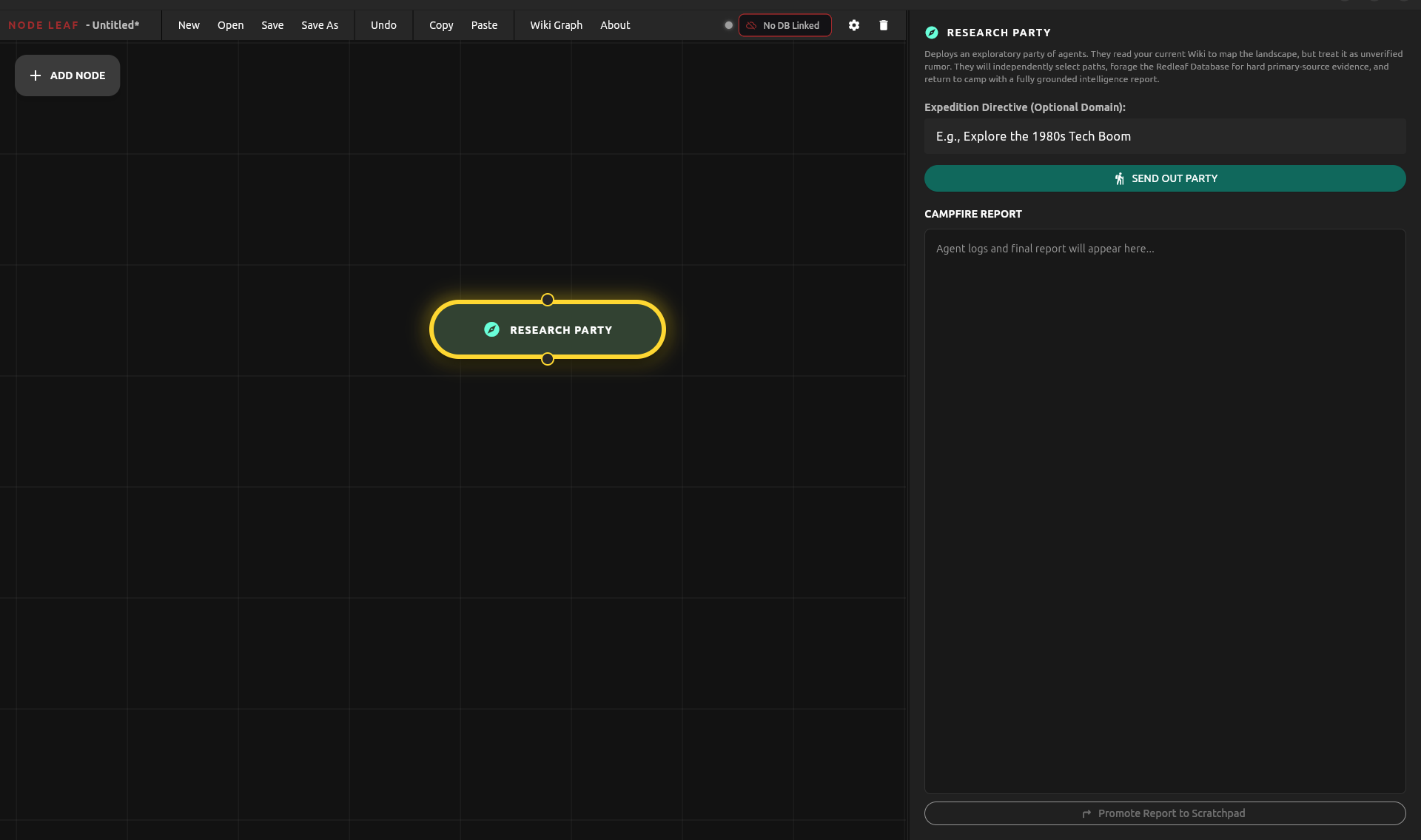

The Agentic “I’m Feeling Lucky”: The Research Party

For decades, search engines offered a blunt instrument for serendipity: the “I’m Feeling Lucky” button. It bypassed the index and dropped you directly at a destination.

In a continuous knowledge system, serendipity requires the Research Party.

If the Wiki Council audits existing territory, the Research Party charts the unknown. It is engineered to force intellectual breakthroughs through system signal novelty.

You give it a broad thematic directive (e.g., “Explore the 1980s Tech Boom”):

- The Scout Reads the Map: It analyzes your current Wiki network. Crucially, it treats your existing knowledge as mere “unverified rumors.”

- Hunting for Novelty: It compares what you think you know against the raw Redleaf database, seeking primary source data not yet synthesized into your Wiki.

- The Forager Extracts: It pulls only hard, cited facts (

[Doc X]) from the unmapped corners of your corpus. - The Chronicler Synthesizes: It returns with a “Campfire Report”—a grounded intelligence briefing that introduces entirely new conceptual nodes to your network.

This is not random search. It is an algorithmic collision. By contrasting established mental models against latent signals hidden deep within your archives, the Research Party manufactures serendipity.

Research Party Node

From Notes to Knowledge Systems

Because all outputs are plain Markdown, the system remains fully portable. But portability is not the innovation.

Accumulation is.

You are no longer interacting with isolated documents. You are orchestrating a distributed system of agents, each responsible for maintaining and evolving a segment of a larger knowledge structure.

Over time, these agents refine arguments, track contradictions, and expand conceptual coverage. Given sufficient time and data, they produce a structured, continuously evolving manuscript.

Beyond Chat

This is not about making research faster.

It is about making knowledge persistent.

You are no longer prompting an AI for answers.

You are maintaining a system that remembers, revises, and compounds understanding over time.

The shift is subtle—but fundamental:

From stateless interaction

to

continuous cognition.