Human RAG: The Forgotten Half of Retrieval

The AI industry is currently fixated on RAG (Retrieval-Augmented Generation). The premise is straightforward: an LLM is only as effective as the context it receives, so we augment prompts with relevant information retrieved from vector databases.

This approach works well, for machines.

But it quietly overlooks something critical: the human in the loop.

Before RAG became a buzzword, we called this process something much simpler: search. But search has fundamentally degraded for humans. We’ve watched the death of powerful, standalone search utilities (like the old Google Search Appliance hardware) in favor of walled-garden cloud storage like Google Drive, where finding a specific document feels like a roll of the dice.

Search has become worse for humans but, paradoxically, better for AI. We’ve engineered incredibly sophisticated pipelines to retrieve and feed context into language models, while leaving human users with almost none of that context themselves.

This is the gap I set out to address with the Redleaf Knowledge Engine and its visual counterpart, Node Leaf.

I think of it as Symmetrical RAG.

It is a system where retrieval serves the human mind just as powerfully as it serves the algorithmic one. In Redleaf, retrieval is transparent. When a document is processed, it doesn’t disappear into a vector store for later use. It is analyzed, decomposed, and surfaced. People, organizations, and relationships are extracted and presented directly in the Discovery interface. You can trace the exact sentences where entities intersect. The system gives you context before anything is ever passed to a model.

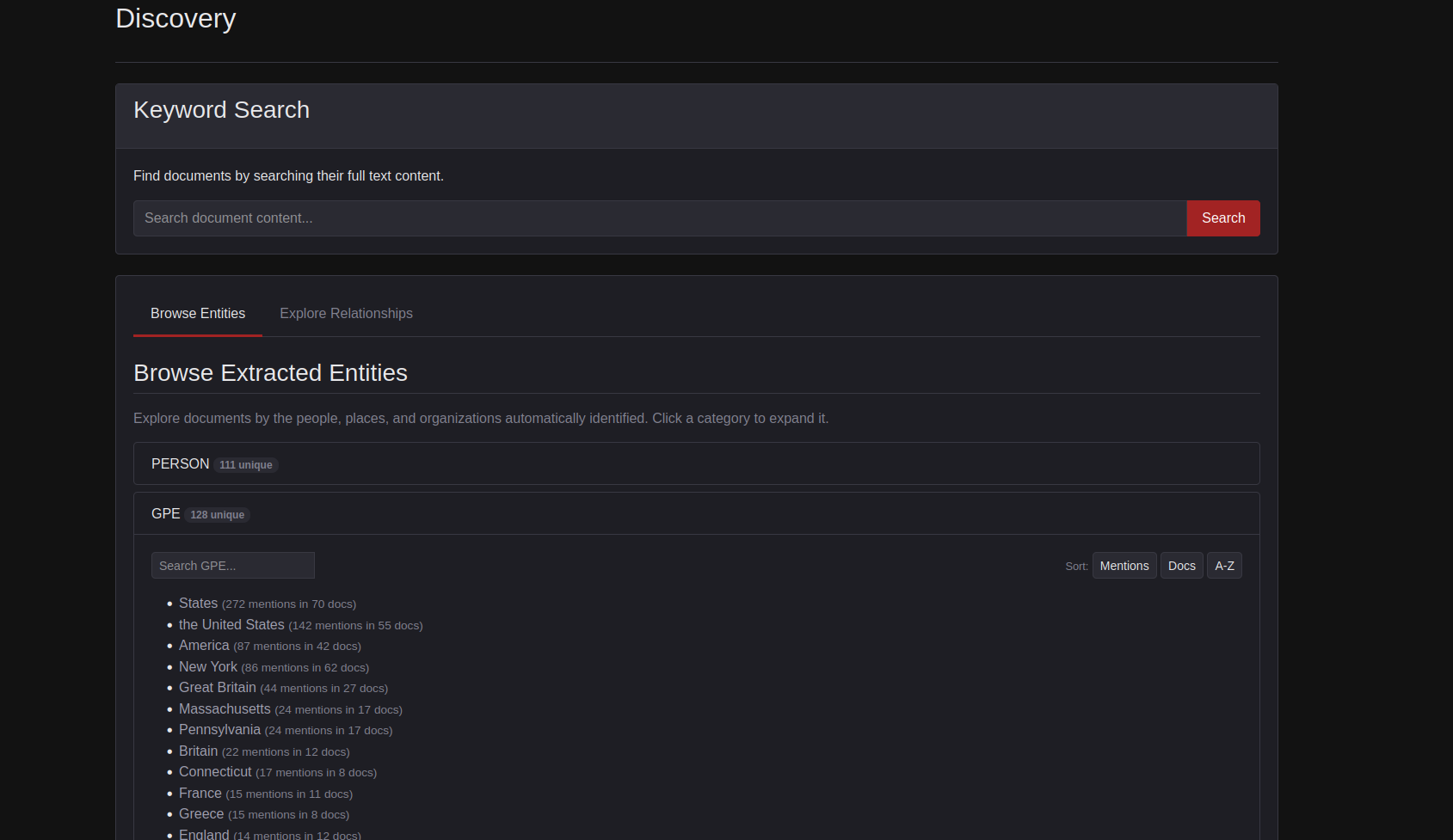
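To make the idea of tracing the sentences where entities intersect more concrete, here is a minimal sketch in plain Python. It is not Redleaf's implementation: the entity list, the sample text, and the function names are all assumptions made up for illustration, and the sentence splitter is deliberately naive. In a real pipeline the entities would come from an NER step rather than a hard-coded list.

```python
import re
from collections import defaultdict
from itertools import combinations

# Hypothetical entity list; a real pipeline would get these from an NER step.
ENTITIES = ["Great Britain", "France", "Spain"]

# Made-up sample text for illustration, not a quotation from any document.
SAMPLE = (
    "Great Britain and Spain hold territories near our borders. "
    "France has long been a rival of Great Britain. "
    "Commerce is the great object of every nation."
)


def split_sentences(text):
    """Naive splitter: break on whitespace that follows ., ! or ?."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def cooccurrence_index(text, entities):
    """Map each pair of entities to the sentences that mention both."""
    index = defaultdict(list)
    for sentence in split_sentences(text):
        present = [e for e in entities if e in sentence]
        # Sort so that each pair has a single canonical key.
        for a, b in combinations(sorted(present), 2):
            index[(a, b)].append(sentence)
    return dict(index)


index = cooccurrence_index(SAMPLE, ENTITIES)
for pair, sentences in index.items():
    print(pair, "->", sentences)
```

The point of the structure is that every link between two entities stays attached to the sentences that justify it, so a reader can inspect the evidence rather than trust a score.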

Below are screenshots of a Redleaf index of the Federalist Papers.

The Federalist Papers have a long history as a test corpus in Unix-style text processing and are commonly associated with the early days of grep. They have long been available as public-domain digital text, and their scope makes them an excellent test bench for Redleaf.

Redleaf surfaces connections. spaCy identifies people, places, and things automatically.

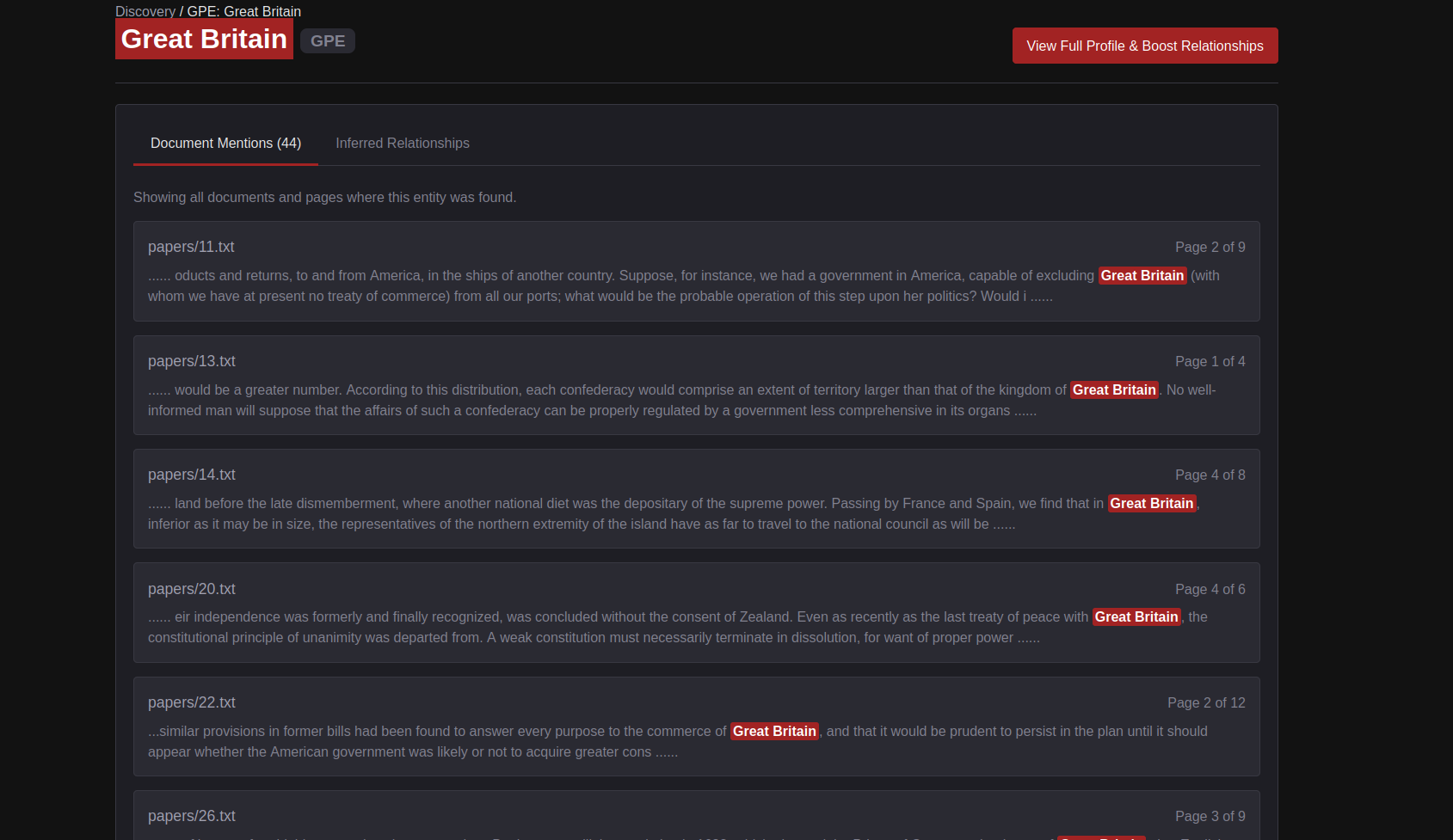

Here, we select the entity Great Britain.

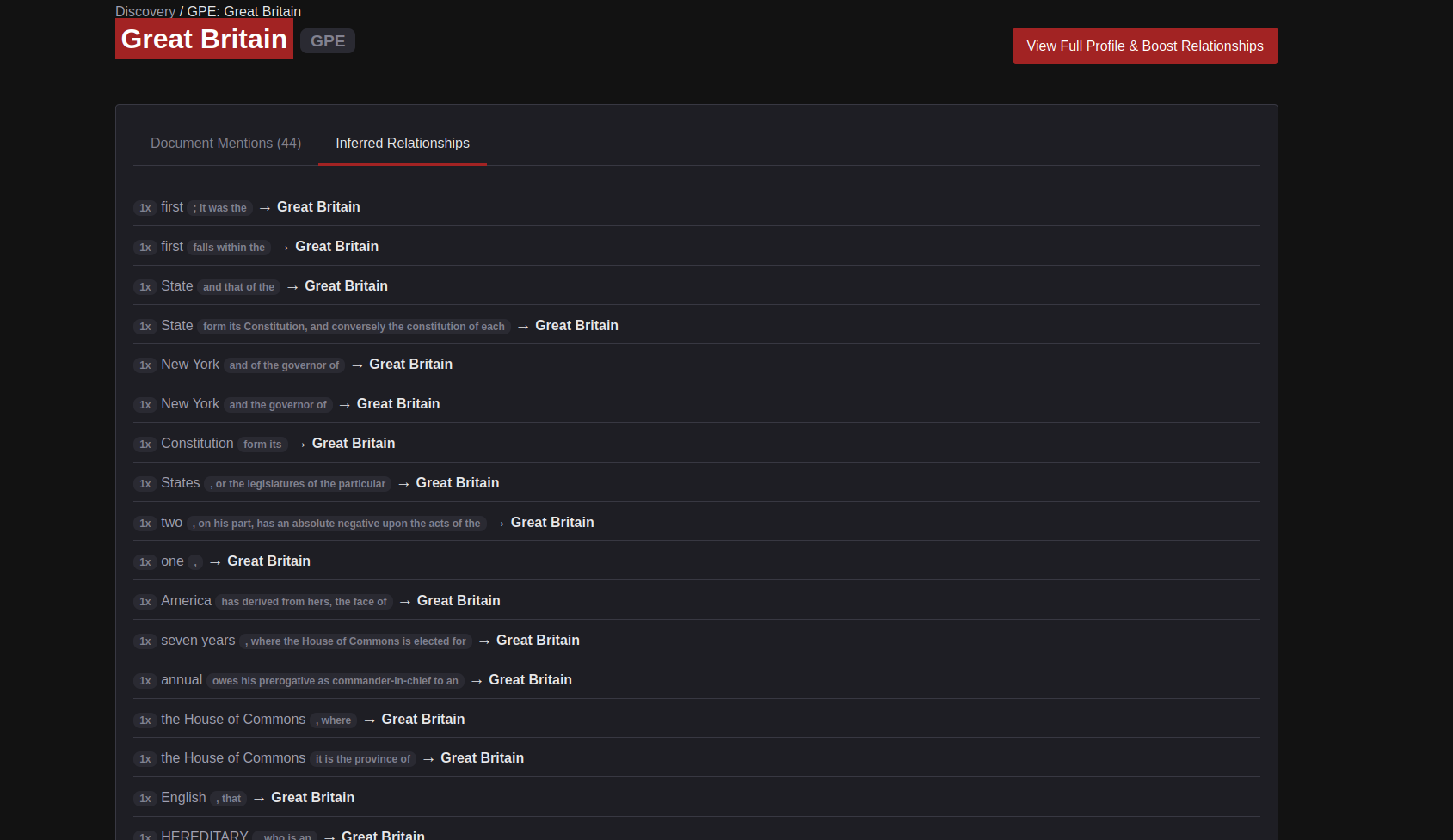

During indexing, Redleaf has already identified the patterns of words that appear between spaCy entities. These are listed under Inferred Relationships.

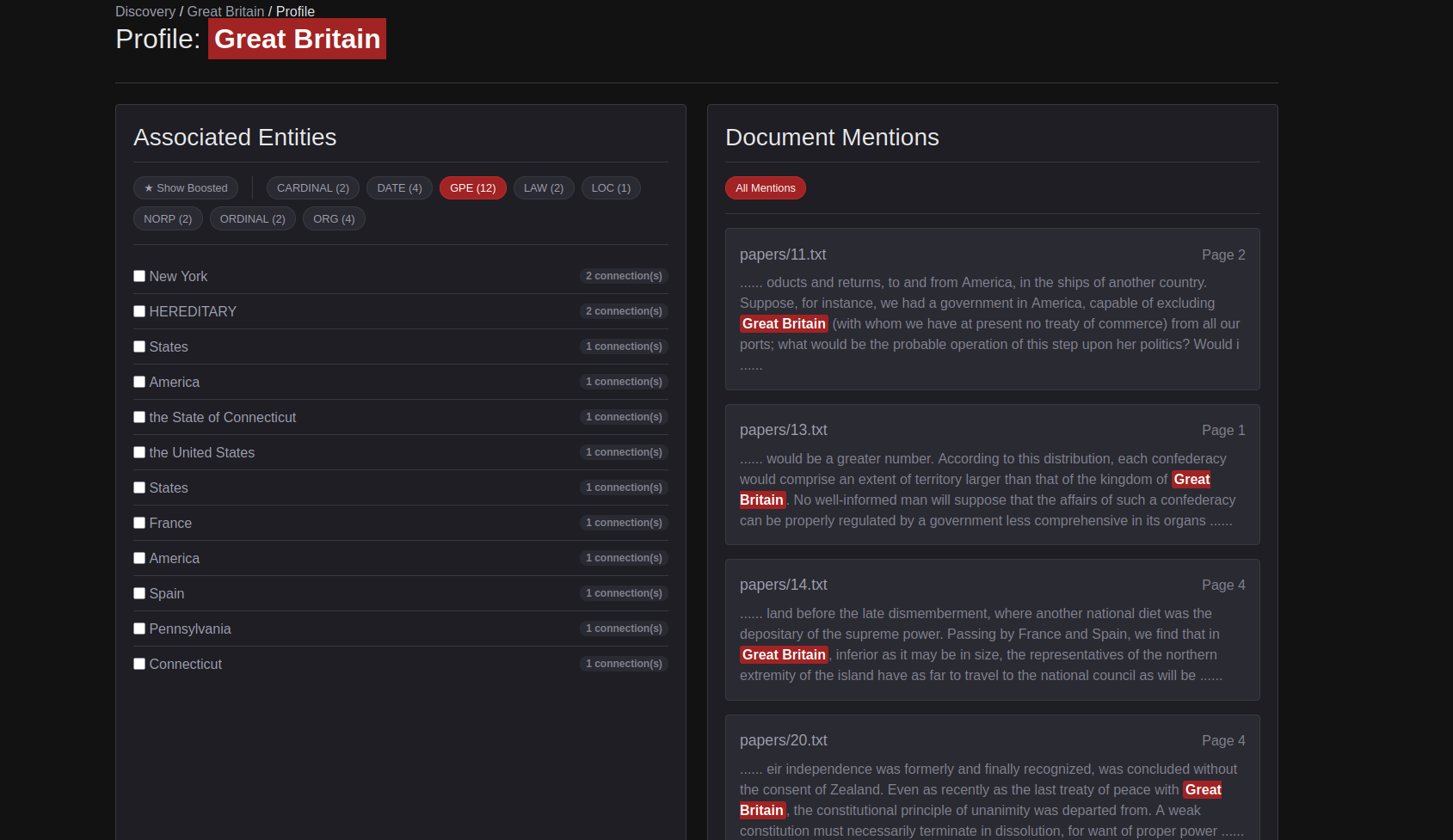
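One simple way to picture "patterns of words between entities" is to collect the phrase that sits between two entity mentions in each sentence and count how often each phrase recurs. The sketch below does that with a regular expression. It is an assumption about the general technique, not a description of how Redleaf computes Inferred Relationships, and the sentences and the `connecting_phrases` helper are invented for the example.

```python
import re
from collections import Counter

# Made-up sentences for illustration only.
SENTENCES = [
    "Spain was at war with Great Britain for many years.",
    "Spain signed a treaty with Great Britain.",
    "In that decade Spain was at war with Great Britain again.",
]


def connecting_phrases(sentences, source, target):
    """Count the phrases found between `source` and `target` in each sentence."""
    # Non-greedy group captures the shortest span of words between the two names.
    pattern = re.compile(re.escape(source) + r"\s+(.+?)\s+" + re.escape(target))
    counts = Counter()
    for sentence in sentences:
        for match in pattern.finditer(sentence):
            counts[match.group(1).lower()] += 1
    return counts


phrases = connecting_phrases(SENTENCES, "Spain", "Great Britain")
print(phrases.most_common())
```

A phrase that recurs across many sentences is a candidate relationship label, while a phrase that appears once is more likely incidental wording.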

Each entity has an assigned profile, where we can sharpen its semantic meaning by choosing which signals to weight more heavily.

Node Leaf extends this idea into a spatial environment.

Instead of interacting with a hidden retrieval pipeline through a chat window, you construct it directly. Document nodes, search nodes, and entity nodes can be arranged, connected, and evaluated in real time. You curate the context yourself. You inspect it. You refine it.

Only after that process, once the Human RAG layer is complete, do you pass the result into an LLM, such as through an Ollama output node.
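As a rough sketch of that hand-off, the code below models curated nodes as plain data, builds a prompt only from the nodes the human left enabled, and prepares a request body in the shape accepted by Ollama's `/api/generate` endpoint. The `ContextNode` class, the node labels, the placeholder texts, and the `llama3` model name are all hypothetical; this is not Node Leaf's internal design, and nothing is actually sent to a server here.

```python
import json
from dataclasses import dataclass


@dataclass
class ContextNode:
    """One curated piece of context on the canvas (hypothetical structure)."""
    kind: str             # e.g. "document", "search", or "entity"
    label: str
    text: str
    enabled: bool = True  # the human can switch a node off after inspecting it


def build_prompt(question, nodes):
    """Join only the enabled nodes, keeping a visible source label on each."""
    sections = [f"[{n.kind}: {n.label}]\n{n.text}" for n in nodes if n.enabled]
    return "Context:\n" + "\n\n".join(sections) + f"\n\nQuestion: {question}"


def ollama_payload(prompt, model="llama3"):
    """Request body for Ollama's /api/generate; the model name is a placeholder."""
    return {"model": model, "prompt": prompt, "stream": False}


# Placeholder nodes; the texts stand in for content a reader has curated.
nodes = [
    ContextNode("document", "Federalist No. 4", "Placeholder passage the reader selected."),
    ContextNode("entity", "Great Britain", "Placeholder summary of where this entity appears."),
    ContextNode("search", "treaty", "Placeholder hit the reader chose to exclude.", enabled=False),
]

prompt = build_prompt("How do the essays describe relations with Great Britain?", nodes)
payload = ollama_payload(prompt)
print(json.dumps(payload, indent=2))
```

Because the prompt is assembled from labeled, human-approved pieces, the context the model receives is exactly what the reader has already seen and accepted.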

This changes the role of AI from an opaque generator to the final step in a visible reasoning process.

When retrieval is hidden, users become dependent on the system and vulnerable to hallucination. When retrieval is exposed—when it becomes visual, spatial, and symmetrical—users remain in control. They think more critically. They engage more deeply.

As I noted in the release of Deep Writer, the goal is not automation.

It is amplification.